Project 1 (Spring 2019)

For full instructions on how to complete the projects for this class, see the full project description. The process as a whole is the same for each of the three projects: what differs is the type of problems your agent will be solving.

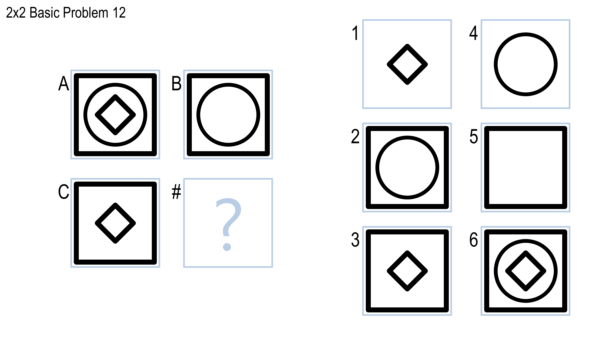

For Project 1, it will be solving Problem Set B, which looks like this:

Your grade will be based on three components: your agent’s performance (30%), your agent’s implementation (20%), and your project reflection (50%).

Performance (30%)

Upon submission, your agent will be tested against Problem Set B’s Basic, Test, Challenge, and Raven’s problems. Only its performance on the Basic and Test problems will impact your grade.

Each set is worth half the performance grade (15% of the project each). For each, you will receive full credit as long as your agent is statistically significantly better than random chance. That means that your agent should answer at least 7 out of 12 Basic problems and 7 out of 12 Test problems correctly to get the full 30%. If your agent is not statistically significantly better than random chance on a set, it will receive no credit for that set.
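The credit rule above can be sketched in a few lines of Python. This is an illustrative sketch, not the autograder's actual code: the function names are hypothetical, and the chance rate of 1/6 is an assumption (six answer choices per problem) that this page does not state. The second function simply shows how unlikely a given score would be under pure guessing at that assumed rate.

```python
from math import comb


def performance_credit(basic_correct, test_correct, threshold=7, per_set=15):
    """Return the performance credit (in % of the project grade).

    Each set (Basic, Test) is all-or-nothing: 15% if the agent answers
    at least `threshold` of its 12 problems correctly, otherwise 0%.
    """
    return sum(per_set for score in (basic_correct, test_correct)
               if score >= threshold)


def chance_tail_probability(correct, total=12, p_chance=1 / 6):
    """Probability of getting `correct` or more right by guessing alone.

    ASSUMPTION: p_chance = 1/6 (six answer choices); not stated in the
    project description.
    """
    return sum(
        comb(total, k) * p_chance ** k * (1 - p_chance) ** (total - k)
        for k in range(correct, total + 1)
    )


if __name__ == "__main__":
    print(performance_credit(7, 7))    # both sets meet the threshold
    print(performance_credit(7, 6))    # only the Basic set earns credit
    print(chance_tail_probability(7))  # chance of 7+ correct by guessing
```

Under the assumed 1/6 chance rate, a guessing agent reaches 7 or more correct out of 12 only about once in a thousand attempts, which is why meeting the threshold counts as clearly better than chance.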

Good performance on the test itself is not the be-all and end-all of the project, but we do believe that building an agent that performs at least adequately is a key part of accomplishing the project’s learning goals.

Make sure to submit with the -error-check flag before submitting to the full autograder to ensure that your code will run error-free. Otherwise, you may lose an attempt.

Performance data will be pulled from the autograder; you do not need to submit anything to Canvas for this.

Implementation (20%)

Unlike other projects in other classes, this project is not solely focused on the final deliverable. Instead, we are explicitly focused on the revision and reflection process. We expect everyone to develop their agent, regularly submit it for testing against the autograder, reflect on the results, and revise their agent to attempt to perform better. You should use the Basic and Challenge problems for rapid feedback and improvement, but you should regularly submit against the autograder as well to see your performance on the Test and Raven’s problems. You have up to 10 attempts against the full autograder.

From everyone, we want to see one of three things:

- Your agent reach perfect performance.

- Multiple submissions where your agent generally is getting better and better.

- Multiple submissions where you are clearly attempting to improve your agent, even if you are not successful.

Note that although those sound somewhat objective, there is some subjectivity here. Submitting your agent five times within a couple of hours with slight tweaks to each submission is clearly not as good as submitting on each of five consecutive days with more substantive improvements each time. We will look at the rate of submission, the amount of revision, and the improvement in performance to assign your implementation grade. Note that the project journal will also ask you to write a brief reflection on each individual submission: if you are able to write something substantive and interesting on each submission to the autograder, you are likely on the right track.

Note that novelty also comes up in the implementation policy. We encourage novel solutions, and we encourage you to experiment with unusual approaches. If during your pursuit of a novel solution you decide that you likely will not be able to achieve the desired performance goals from the section above, we’d encourage you to pivot to a more promising approach, but your implementation credit and project journal will be rewarded for taking risks. You should start the project early enough to leave yourself room for such a late pivot if need be.

Implementation data will be pulled from the autograder; you do not need to submit anything to Canvas for this.

Journal (50%)

For the reflection, you will write a personal journal on your process of constructing the agent. This journal should follow this structure, with each section and journal entry clearly marked:

- Introduction: A ~1 page introduction to your overall idea for addressing the problem. Ideally, you’ll write this prior to even beginning implementation.

- Journal Entries: For each submission to the autograder, write a short reflection. In the reflection, you should address the following questions:

  - When was this submission sent in?

  - What did you change for this version? Why?

  - How would you compare this version of the agent to the way you feel you, a human, approach the problems? Does it think similarly to how you think, or differently?

  - How did it perform? What problems or types of problems did it do well on? Where did it struggle? How is its efficiency?

- Conclusion: A ~1 page summary of your final agent. In the conclusion, you should address the following questions:

  - How would you characterize the overall process of designing your agent? Trial-and-error? Deliberate improvement? Targeting one type of problem at a time?

  - How similar do you feel your final agent is to how you, a human, would approach the test? Why or why not?

  - What improvements would you make if you had more time and/or more computational resources?

The maximum length of your journal is based on your number of submissions to the autograder. For each submission, you may have 1 page in your reflection, plus 3 additional pages: one for the Introduction, one for the Conclusion, and one to give you a little flexibility. If you include any citations, your references section does not count against your length limit, but any figures or diagrams do.

For example, if you submit to the autograder 5 times, your journal may be up to 8 pages. If you submit to the autograder 10 times, your journal may be up to 13 pages. Note that we only enforce the overall length limit, not a per-section length limit. We expect, for example, that you will probably write more for your first submission than for any subsequent submission. We would expect that for most people, the journal entry for the first submission would be 2-3 pages, and each subsequent one may be less than a page.
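The length rule works out to one page per submission plus three. As a quick check, here is a minimal Python sketch; the function name is hypothetical, and the 0 to 10 bound simply reflects the 10-attempt limit on the full autograder mentioned earlier.

```python
def max_journal_pages(submissions):
    """Maximum journal length in pages for a given number of submissions.

    One page per autograder submission, plus three extra pages:
    Introduction, Conclusion, and one page of flexibility.
    """
    if not 0 <= submissions <= 10:
        raise ValueError("submissions must be between 0 and 10")
    return submissions + 3


if __name__ == "__main__":
    for n in (5, 10):
        print(n, "submissions ->", max_journal_pages(n), "pages")
```

This reproduces the two examples above: 5 submissions allow up to 8 pages, and 10 submissions allow up to 13.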

Your journal must be written in JDF format. Any content beyond the length corresponding to your number of submissions will not be considered for a grade.

If you would like to include additional information beyond the length limit, you may include it in clearly-marked appendices. These materials will not be used in grading your assignment, but they may help you get better feedback from your classmates and grader.

Submission Instructions

Your actual code should be submitted directly to the autograder according to the full project directions. Complete your project journal using JDF, then save your submission as a PDF. Journals should be submitted to the corresponding assignment submission page in Canvas. You should submit a single PDF for this assignment. This PDF will be ported over to Peer Feedback for peer review by your classmates. If your assignment involves things (like videos, working prototypes, etc.) that cannot be provided in PDF, you should provide them separately (through OneDrive, Google Drive, Dropbox, etc.) and submit a PDF that links to or otherwise describes how to access that material.

This is an individual assignment. All work you submit should be your own. Make sure to cite any sources you reference, and use quotes and in-line citations to mark any direct quotes.

Late work is not accepted without advance agreement except in cases of medical or family emergencies. In the case of such an emergency, please contact the Dean of Students.

Grading Information

Your overall project grade will be posted to the gradebook in Canvas, along with a comment indicating the breakdown of scores across these three categories. Students whose agents perform in the top 10 in the class (Basic B + Test B, ties broken by Raven’s and Challenge problems) will receive 5 extra points on their final average.

Peer Review

After submission, your assignment will be ported to Peer Feedback for review by your classmates. Grading is not the primary function of this peer review process; the primary function is simply to give you the opportunity to read and comment on your classmates’ ideas, and receive additional feedback on your own. All grades will come from the graders alone.

You receive 1.5 participation points for completing a peer review by the end of the day Thursday; 1.0 for completing a peer review by the end of the day Sunday; and 0.5 for completing it after Sunday but before the end of the semester. For more details, see the participation policy.